Architecture, Engineering & Construction

Integrated BIM tools, including Revit, AutoCAD, and Civil 3D

Product Design & Manufacturing

Professional CAD/CAM tools built on Inventor and AutoCAD

Emerging Tech

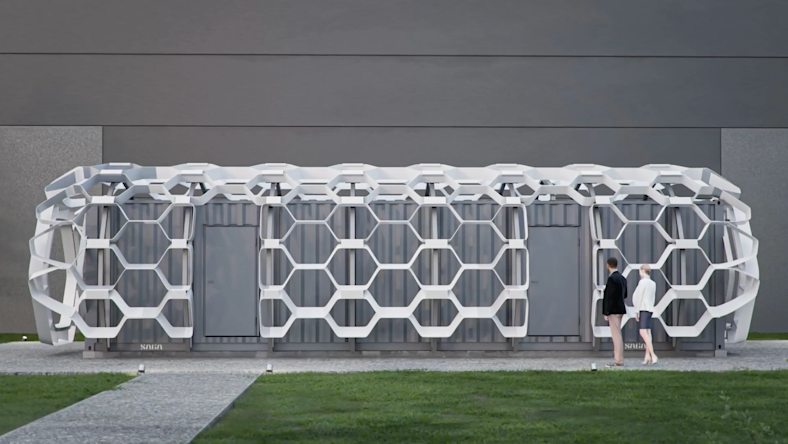

Image courtesy of IDU.

Emerging Tech

Image courtesy of Norconsult.

M&E

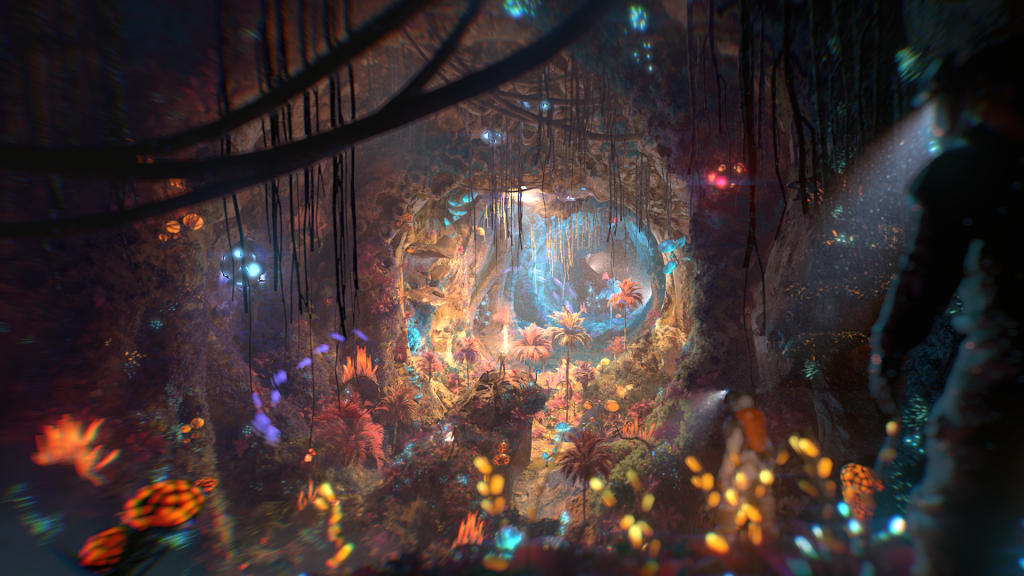

Image courtesy of Kugali Media.

M&E

Image courtesy of Netflix.

AECO

Image courtesy of Kaylon Construction.

AECO

Image courtesy of Nordic Office of Architecture.